CC BY 4.0 (unless otherwise stated or for reposted articles)


Node Embeddings

start=>start: Input Graph

info=>operation: Structured Features

setCache=>operation: Learning Algorithm

end=>end: Prediction

start->info->setCache->end

Input Graph -> feature engineering: instead of engineering features by hand, we now learn them automatically.

Graph Representation Learning

Goal: efficient, task-independent feature learning for machine learning with graphs.

Why Embedding

Task: map nodes into an embedding space.

Setup

Shallow encoding: the encoder is just an embedding lookup.
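To make the lookup concrete, here is a minimal sketch in plain Python. The names `make_embedding_table` and `encode`, the dimension, and the random initialisation are illustrative assumptions, not part of the original notes.

```python
import random

def make_embedding_table(nodes, dim, seed=0):
    """Create one randomly initialised d-dimensional vector per node.

    The table plays the role of the embedding matrix Z
    (hypothetical initialisation: small Gaussian noise).
    """
    rng = random.Random(seed)
    return {v: [rng.gauss(0.0, 0.1) for _ in range(dim)] for v in nodes}

def encode(table, node):
    """Shallow encoder: ENC(v) simply returns the vector stored for v."""
    return table[node]

nodes = ["a", "b", "c", "d"]
Z = make_embedding_table(nodes, dim=3)
z_a = encode(Z, "a")
```

There is no network between the node and its vector here: every entry of the table is a free parameter, which is what makes the encoder "shallow".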

Framework Summary

Encoder + Decoder Framework

- Shallow encoder: embedding lookup

- Parameters to optimize: $Z$, which contains the node embeddings $z_u$ for all nodes $u \in V$

- Decoder: based on node similarity

- Objective: maximize $z_v^\top z_u$ for node pairs $(u, v)$ that are similar
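As a small illustration of the decoder, the sketch below scores node pairs by the dot product of their embeddings. The vectors are made-up toy values and the helper name `dot` is an assumption for this example.

```python
def dot(z_u, z_v):
    """Decoder: similarity of two nodes as the dot product of their embeddings."""
    return sum(a * b for a, b in zip(z_u, z_v))

# Toy embeddings (hypothetical values): u and v point in similar directions,
# w points in the opposite direction.
Z = {"u": [1.0, 0.0], "v": [0.8, 0.6], "w": [-1.0, 0.0]}

score_uv = dot(Z["u"], Z["v"])
score_uw = dot(Z["u"], Z["w"])
```

Training would adjust the entries of `Z` so that pairs regarded as similar in the graph end up with a larger score than dissimilar pairs, as `(u, v)` does relative to `(u, w)` here.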

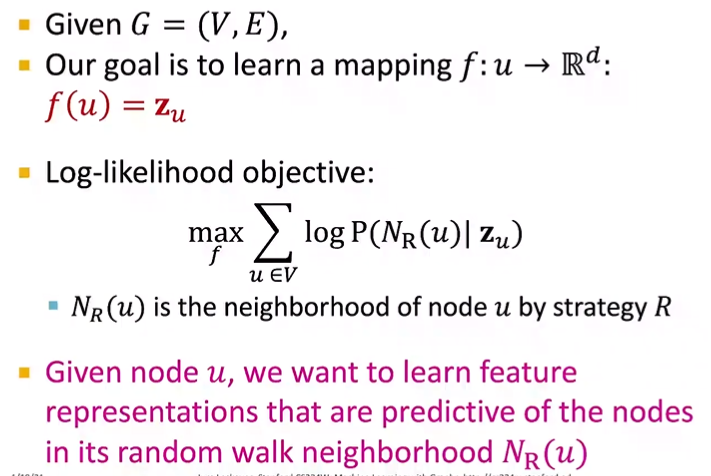

Random Walk Approaches for Node Embeddings

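- Vector $z_u$: the embedding of node $u$

- Probability $P(v \mid z_u)$: the predicted probability of visiting node $v$ on random walks starting from node $u$

Nonlinear functions used to produce predicted probabilities:

- softmax: turns a vector of $K$ real values into $K$ probabilities that sum to 1

- sigmoid: maps a real value into the range $(0, 1)$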
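The two functions can be sketched as follows. The helper `visit_probabilities`, which applies softmax to dot-product scores over all nodes, is one common way to parameterise the probability of visiting $v$ from $u$ and is an assumption here, as are the toy vectors.

```python
import math

def sigmoid(x):
    """Map a real value into (0, 1); written to avoid overflow for large |x|."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)

def softmax(scores):
    """Turn K real values into K probabilities that sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def visit_probabilities(Z, u):
    """Assumed parameterisation: softmax over dot products of z_u with every node."""
    nodes = list(Z)
    scores = [sum(a * b for a, b in zip(Z[u], Z[n])) for n in nodes]
    return dict(zip(nodes, softmax(scores)))

Z = {"u": [1.0, 0.0], "v": [1.0, 0.0], "w": [-1.0, 0.0]}
probs = visit_probabilities(Z, "u")
```

Because the softmax sums over every node in the graph, this form is expensive on large graphs. That cost is the usual motivation for cheaper sigmoid-based approximations.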

Random Walk Embeddings

Unsupervised Feature Learning

Intuition:

- Find embeddings of nodes in a d-dimensional space that preserve similarity.

- Idea: learn node embeddings such that nodes that are nearby in the network are close together in the embedding space.

- Given a node u, how do we define nearby nodes?
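One way to answer the question of what counts as "nearby" is to collect the nodes visited by short random walks that start at u. The sketch below uses fixed-length, uniform walks. The function names, the walk length, the number of walks, and the toy adjacency list are all assumptions for illustration.

```python
import random

def random_walk(adj, start, length, rng):
    """Uniform random walk: at each step move to a neighbour chosen at random."""
    walk = [start]
    for _ in range(length):
        neighbors = adj[walk[-1]]
        if not neighbors:  # dead end: stop early
            break
        walk.append(rng.choice(neighbors))
    return walk

def sample_neighborhood(adj, u, num_walks, length, seed=0):
    """Multiset of nodes visited by several walks from u (u itself excluded as the start)."""
    rng = random.Random(seed)
    visited = []
    for _ in range(num_walks):
        visited.extend(random_walk(adj, u, length, rng)[1:])
    return visited

# Small undirected toy graph as an adjacency list (hypothetical data).
adj = {"a": ["b", "c"], "b": ["a", "c"], "c": ["a", "b", "d"], "d": ["c"]}
N_a = sample_neighborhood(adj, "a", num_walks=5, length=3)
```

Nodes that appear often in `N_a` are the ones whose embeddings should end up close to that of `a`.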

Feature Learning as Optimization

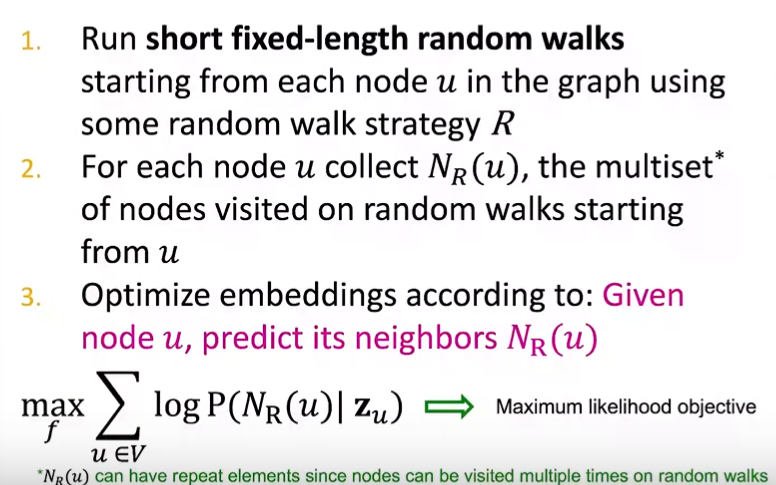

Random Walk Optimization

Overview of node2vec

Goal: embed nodes with similar network neighborhoods close together in the feature space.

Key observation: a flexible notion of the network neighborhood $N_R(u)$ of node $u$ leads to rich node embeddings.
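The flexible neighborhood in node2vec comes from a biased second-order random walk. The notes above do not spell out its parameters. The sketch below assumes the usual return parameter `p` and in-out parameter `q`, and the function name and toy graph are illustrative only.

```python
import random

def node2vec_walk(adj, start, length, p, q, rng):
    """Biased walk: the next step depends on both the current and the previous node."""
    walk = [start]
    while len(walk) < length + 1:
        cur = walk[-1]
        neighbors = adj[cur]
        if not neighbors:
            break
        if len(walk) == 1:
            # No previous node yet: the first step is uniform.
            walk.append(rng.choice(neighbors))
            continue
        prev = walk[-2]
        weights = []
        for nxt in neighbors:
            if nxt == prev:
                weights.append(1.0 / p)  # step back to the previous node
            elif nxt in adj[prev]:
                weights.append(1.0)      # stay at distance 1 from the previous node
            else:
                weights.append(1.0 / q)  # move farther away from the previous node
        walk.append(rng.choices(neighbors, weights=weights, k=1)[0])
    return walk

# Path graph a - b - c - d (hypothetical data).
adj = {"a": ["b"], "b": ["a", "c"], "c": ["b", "d"], "d": ["c"]}
walk = node2vec_walk(adj, "a", length=3, p=1e12, q=1.0, rng=random.Random(0))
```

A small `q` pushes walks outward (a more depth-first view of the neighborhood), while a small `p` keeps them close to the start (a more breadth-first view). With a very large `p`, as in the usage line, stepping back becomes extremely unlikely.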

Embedding Entire Graph

Summary

Three ideas for graph embeddings:

- Approach 1: embed the nodes and sum/average them

- Approach 2: create a super-node that spans the (sub)graph, then embed that node

- Approach 3: anonymous walk embeddings

  - Idea 1: sample the anonymous walks

  - Idea 2: embed the anonymous walks
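Approach 1 and the anonymous-walk idea can be sketched in a few lines. An anonymous walk replaces each node by the index of its first appearance in the walk, so the identity of the nodes no longer matters. Summarising a graph by the fraction of sampled walks of each anonymous type is an assumed reading of "sample the anonymous walks", and all function names and data below are hypothetical.

```python
from collections import Counter

def mean_pool(Z, nodes):
    """Approach 1: average the node embeddings of a (sub)graph."""
    nodes = list(nodes)
    if not nodes:
        raise ValueError("need at least one node")
    dim = len(Z[nodes[0]])
    return [sum(Z[v][k] for v in nodes) / len(nodes) for k in range(dim)]

def anonymous_walk(walk):
    """Replace each node by the (1-based) index of its first occurrence in the walk."""
    first_seen = {}
    out = []
    for node in walk:
        if node not in first_seen:
            first_seen[node] = len(first_seen) + 1
        out.append(first_seen[node])
    return tuple(out)

def anonymous_walk_distribution(walks):
    """Assumed graph summary: fraction of sampled walks per anonymous-walk type."""
    counts = Counter(anonymous_walk(w) for w in walks)
    total = sum(counts.values())
    return {aw: c / total for aw, c in counts.items()}

Z = {"a": [1.0, 2.0], "b": [3.0, 4.0]}
z_graph = mean_pool(Z, ["a", "b"])
dist = anonymous_walk_distribution([["A", "B", "A"], ["C", "D", "C"], ["A", "B", "C"]])
```

The walks `A-B-A` and `C-D-C` visit different nodes but map to the same anonymous walk, which is why they are counted together in `dist`.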

How to use the embeddings $z_i$ of nodes:

- Clustering / community detection

- Node classification

- Link prediction

- Graph classification
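As one example of these uses, link prediction can be sketched by ranking node pairs that are not yet connected by the dot product of their embeddings. This scoring rule is only one possible choice, and the function name, embeddings, and graph below are made up for illustration.

```python
def dot(z_u, z_v):
    """Similarity score of two nodes: dot product of their embeddings."""
    return sum(a * b for a, b in zip(z_u, z_v))

def rank_candidate_links(Z, adj, top_k=3):
    """Return the top_k unconnected node pairs with the highest embedding similarity."""
    nodes = sorted(Z)
    candidates = []
    for i, u in enumerate(nodes):
        for v in nodes[i + 1:]:
            if v not in adj.get(u, ()):  # skip pairs that are already edges
                candidates.append((dot(Z[u], Z[v]), u, v))
    candidates.sort(reverse=True)
    return [(u, v) for _, u, v in candidates[:top_k]]

# Hypothetical embeddings and existing edges.
Z = {"a": [1.0, 0.0], "b": [0.9, 0.1], "c": [0.0, 1.0], "d": [0.1, 0.9]}
adj = {"a": ["c"], "c": ["a"], "b": [], "d": []}
predicted = rank_candidate_links(Z, adj, top_k=2)
```

The same vectors could instead be fed to a clustering algorithm or a classifier for the other tasks in the list.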